The five levers of AI performance

2/2/2026 · 3 min read

A framework for diagnosing why adoption stalls, and where to intervene

When AI adoption isn't working, the instinct is usually to add more training. More tutorials. More "here's what the tool can do" sessions.

It rarely helps.

Not because training is useless, but because it assumes the problem is capability. In our experience, capability is only one of five places where adoption gets stuck, and it's often not the first one that needs attention.

This piece explains the framework we use to diagnose AI adoption friction: five levers that determine whether people use AI tools confidently, consistently, and well.

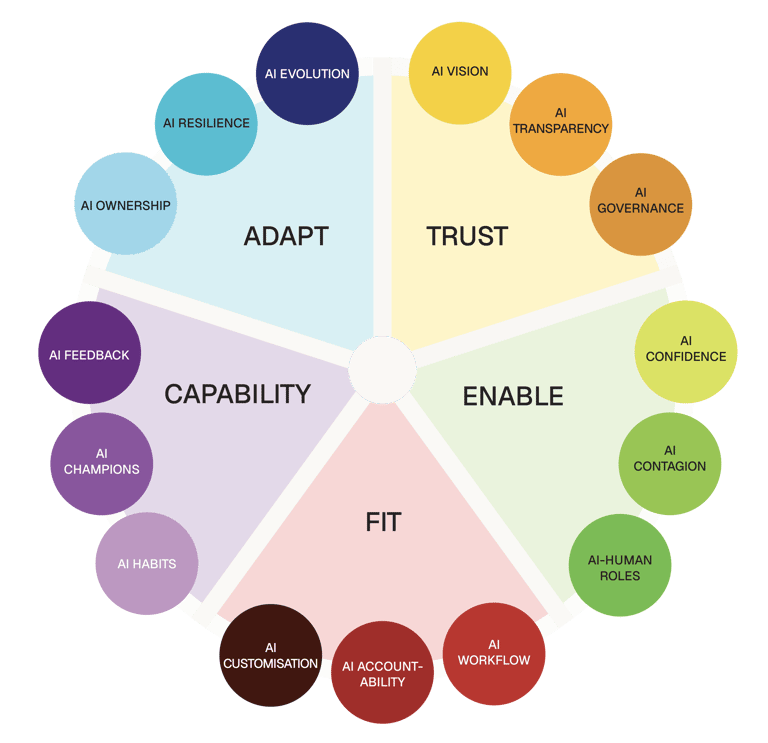

The five levers

When we mapped friction points across organisations implementing AI (in finance, health, consulting, government, and tech), we found they clustered into five categories. Each represents a lever: a point where behaviour tips toward confident use or away from it.

Trust

Does the team believe the AI is reliable, transparent, and working in their interest?

Trust problems show up as hesitation, workarounds, or over-reliance that collapses at the first error. Common patterns:

People take AI outputs as-is without evaluating them, until something visibly fails

Confidence craters after a single bad experience and doesn't recover

Teams don't know what happens to their data, so they avoid the tools or use them minimally

There's no shared story about why AI matters here: people hear "efficiency" but suspect "headcount"

Trust interventions include: transparent communication about data use, leadership modelling appropriate AI use, and building in moments where AI gets things wrong safely, so people learn to evaluate its outputs.

Enable

Can people access and use the tools without friction?

Enable problems show up as low uptake despite good intentions. The will is there; the way isn't. Common patterns:

Tools require too many steps to access, so people revert to old methods

Day one is confusing, and people don't come back

IT or compliance requirements create barriers that feel disproportionate to the benefit

The tools work differently on different devices or contexts, creating inconsistency

Enable interventions include: reducing steps to first use, fixing the day-one experience, making access consistent across contexts, and removing friction that doesn't add value.

Fit

Do people know which tasks AI should touch, and which it shouldn't?

Fit problems show up as either over-reliance or avoidance. People aren't sure when AI is appropriate, so they default to using it for everything or nothing. Common patterns:

People use AI for tasks where it's risky and avoid it for tasks where it would help

There's no shared understanding of where AI adds value versus where human judgement is essential

High-stakes decisions are being informed by AI without appropriate oversight

Teams are reinventing the wheel, with everyone figuring out fit individually

Fit interventions include: mapping which tasks benefit from AI and which don't, creating shared guidance on appropriate use, and building in checkpoints for high-stakes decisions.

Capability

Can people use AI well, not just often?

Capability problems show up as poor-quality AI use despite access and intent. People are using the tools but not getting value. Common patterns:

Prompts are vague, so outputs are generic

People accept first outputs without iteration or evaluation

Nobody knows how to override or adjust when something isn't right

AI-assisted work looks polished but lacks substance

Capability interventions include: skill-building on prompting, evaluation, and iteration; sharing examples of good AI use; creating feedback loops so people learn from what works.

Adapt

Can the team keep up as tools evolve and risks shift?

Adapt problems show up as behaviours that used to work becoming counterproductive. The context changed; the habits didn't. Common patterns:

A tool update changed how something works, but people are still using old patterns

New risks have emerged (regulatory, reputational) but practices haven't adjusted

Team composition changed and newcomers weren't onboarded to current norms

What worked in a pilot doesn't work at scale

Adapt interventions include: building in regular reviews of AI practices, creating mechanisms to surface when conditions have shifted, and updating guidance when tools or contexts change.

Using the framework

The levers aren't a sequence. You don't have to "fix Trust before working on Capability." They're diagnostic: a way to figure out where the friction actually is, so you can intervene in the right place.

In practice:

If uptake is low despite training → probably not Capability. Check Enable (can people access it easily?) and Trust (do they believe it's worth it?).

If people are using AI but quality is poor → probably Capability or Fit. Are they skilled enough to use it well? Do they know when to use it?

If things worked for a while then stalled → probably Adapt. What changed? Tool update? Team change? New risks?

If there's anxiety or resistance → probably Trust. What are people worried about? Job security? Data? Being judged?
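The four rules of thumb above can be read as a simple lookup from an observed symptom to the levers worth checking first. The sketch below is illustrative only: the symptom keys and the `levers_to_check` helper are hypothetical names invented for this example, not part of the diagnostic itself.

```python
from enum import Enum


class Lever(Enum):
    TRUST = "Trust"
    ENABLE = "Enable"
    FIT = "Fit"
    CAPABILITY = "Capability"
    ADAPT = "Adapt"


# Hypothetical symptom labels; the mapping mirrors the four rules of thumb above.
SYMPTOM_TO_LEVERS = {
    "low_uptake_despite_training": [Lever.ENABLE, Lever.TRUST],
    "in_use_but_poor_quality": [Lever.CAPABILITY, Lever.FIT],
    "worked_then_stalled": [Lever.ADAPT],
    "anxiety_or_resistance": [Lever.TRUST],
}


def levers_to_check(symptoms):
    """Return the levers suggested by the observed symptoms.

    Levers appear in first-seen order, without duplicates.
    Unknown symptom labels are ignored.
    """
    suggested = []
    for symptom in symptoms:
        for lever in SYMPTOM_TO_LEVERS.get(symptom, []):
            if lever not in suggested:
                suggested.append(lever)
    return suggested


if __name__ == "__main__":
    observed = ["low_uptake_despite_training", "anxiety_or_resistance"]
    for lever in levers_to_check(observed):
        print(lever.value)
```

A lookup like this only suggests where to look first; the output is a starting point for conversation, not a verdict.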

The diagnostic we built asks fifteen questions that map to these levers. At the end, you know which lever is most stuck, and you can pick interventions that address that specific friction, rather than defaulting to "more training."
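As a rough illustration of how answers could roll up into a "most stuck" lever, the sketch below averages scores per lever and picks the lowest. The scoring scheme is an assumption made for this example: the article does not describe the real questions, the answer scale, or how many questions map to each lever. Here each answer is a hypothetical `(lever, score)` pair on a 1 to 5 scale, where 1 means strong friction.

```python
from collections import defaultdict
from statistics import mean

LEVERS = ("Trust", "Enable", "Fit", "Capability", "Adapt")


def most_stuck_lever(answers):
    """Return (lever, averages) for the lever with the lowest mean score.

    `answers` is an iterable of (lever, score) pairs. The 1-5 scale
    (1 = strong friction, 5 = no friction) is an assumed convention,
    not the scale used by the actual diagnostic.
    """
    scores_by_lever = defaultdict(list)
    for lever, score in answers:
        if lever not in LEVERS:
            raise ValueError(f"Unknown lever: {lever!r}")
        if not 1 <= score <= 5:
            raise ValueError(f"Score out of range: {score!r}")
        scores_by_lever[lever].append(score)

    if not scores_by_lever:
        raise ValueError("No answers provided")

    averages = {lever: mean(scores) for lever, scores in scores_by_lever.items()}
    # On a tie, min() keeps the lever whose answers appeared first.
    stuck = min(averages, key=averages.get)
    return stuck, averages


if __name__ == "__main__":
    # Fifteen made-up answers, three per lever (an assumed split).
    sample = [
        ("Trust", 4), ("Trust", 4), ("Trust", 5),
        ("Enable", 2), ("Enable", 1), ("Enable", 2),
        ("Fit", 3), ("Fit", 3), ("Fit", 4),
        ("Capability", 4), ("Capability", 3), ("Capability", 4),
        ("Adapt", 5), ("Adapt", 4), ("Adapt", 4),
    ]
    lever, averages = most_stuck_lever(sample)
    print(lever)
```

With the made-up sample data, Enable has the lowest average, which would point toward access and day-one experience rather than more training.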

The levers in action

We developed this framework through the Human-AI Performance project with Infosys Consulting. The diagnostic, methods, and toolkit all connect to these five levers.

For the case study, see Infosys Consulting → Human-AI Performance.

For the research behind the framework, see Human-AI Performance Trends 2025.

This thinking emerged from the research behind Human-AI Performance, our AI adoption system built with Infosys Consulting. For the full research findings, see Human-AI Performance Trends 2025.

© 2026, BehaviourStudio. All rights reserved. Behaviour Thinking is a registered trademark of BehaviourStudio.