Training that survives

D&AD

Lauren Kelly

SNAPSHOT

D&AD had something most programmes spend years trying to get right: sessions people genuinely enjoyed, led by trainers who were top of their craft.

The drop-off came afterwards, when the calendar filled up and the learning had to compete with real work. That’s also where delivery started to drift as more trainers and mixed formats entered the picture. Same programme, different experience. Small inconsistencies, extra follow-up, and a lot of “can you send me that thing again?”

We helped D&AD turn great sessions into a more dependable system. A way for trainers to plan and deliver with consistency, handle predictable group dynamics without fuss, and close in a way that makes follow-through more likely.

Learning Behaviour Build

On paper, D&AD's masterclass programme was working.

The masterclasses were well attended. The trainers were well-known experts. People left energised, notebooks full, feedback forms saying the right things.

Then Monday happened.

Not the mythical Monday where everyone has time to “embed learnings”. The normal one. Back-to-back calls. An inbox that breeds overnight. The vague feeling that you heard something genuinely useful last week, but cannot quite tell your team what it was, let alone use it.

For the programme team, that Monday showed up in a familiar pattern. People asking for “that template”. Requests for a refresher. Trainers teaching the same topic in noticeably different ways. Multi-trainer days landing well in parts, then sagging in the joins.

The delivery was good, then variable, then harder to package as the programme scaled.

That variability has a cost. It creates rework and extra support. It also creates a quiet reputational wobble. Nothing dramatic. Just enough to make a premium programme feel less dependable than it should.

So the real job was not “make the sessions nicer”.

It was to make the outcomes more reliable, even when delivery is busy, multi-trainer, and happening in real rooms with real humans.

We built a system the trainers and programme team could actually run

It had a simple logic:

First, design the content as a balanced learning experience.

Then, design the delivery for human behaviour.

The trainer pack makes this practical. It gives trainers a clear planning flow: map the learning journey, check the FORGES balance, then scan the behaviour cards for the dynamics that could trip up the session.

Design for balanced learning content with FORGES

Most expert-led sessions drift into one mode: information delivery. It comes from habit, time pressure and the curse of being an expert.

FORGES gave trainers a fast way to spot that drift and correct it without turning planning into a second job.

In practice, it looked like this: a trainer lays out their session and realises it is mostly one long stretch of input. The cards nudge them to mix up their delivery, then move people into discussion or practice so the learning has somewhere to land.

So instead of “tell, tell, tell, questions at the end”, sessions found a steadier rhythm. A short input with a purpose. A shift into Group or Experiment. A tangible output people could take back to work.

Design for humans in the room with the behaviour cards

Even a well-designed session can fall apart if the facilitator doesn't know how to navigate the behaviours in the room, both the helpful ones and the not-so-useful ones.

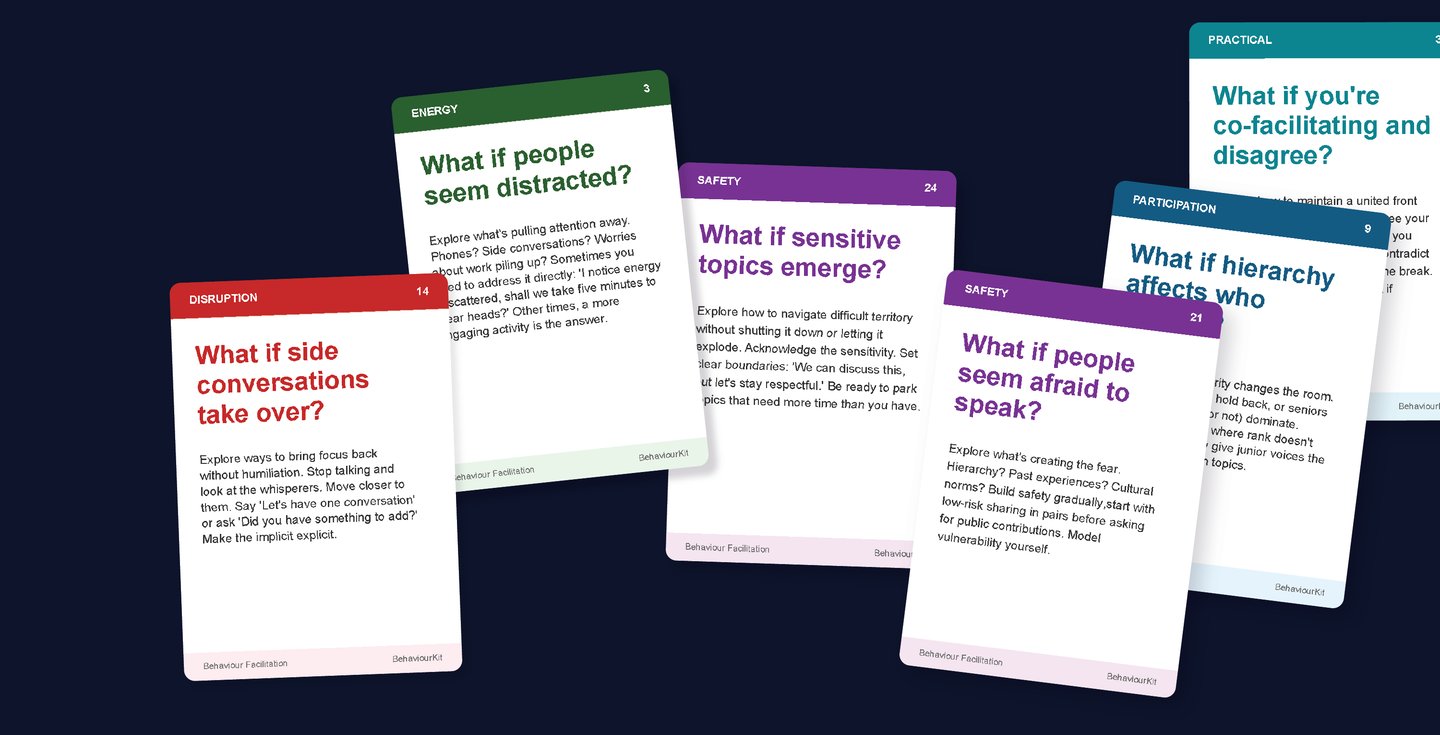

This is where the behaviour cards did their job. They gave trainers simple, in-the-moment moves for the predictable problems that turn a good plan into a long afternoon.

Take the “early birds dominate” moment, where the fastest voices fill the space and everyone else disappears. The card offers a clean move: write before you speak. It gives quieter thinkers time to form ideas before the quick talkers take the mic.

These are small interventions. That is the point. They are the sort of thing you can actually do while you are holding a room, with a flipchart marker that has already dried out.

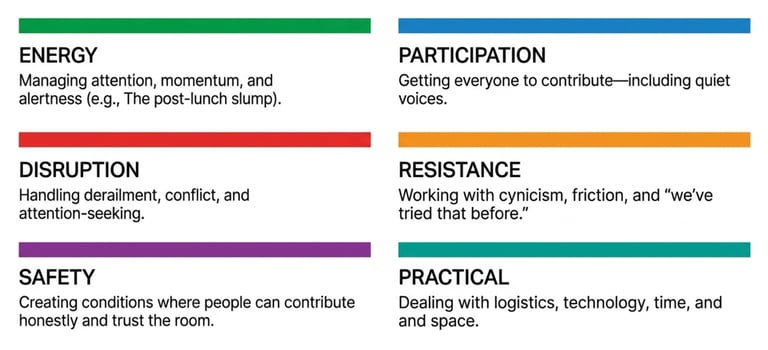

We sorted the behaviour cards into the main dimensions for easy selection.

The biggest shift was reliability.

Trainers planned with a shared method and a shared language, without being forced into a single “approved” style. The pack guided preparation, delivery, and follow-through, and fed what worked back into the content lifecycle so sessions improved over time rather than resetting with each trainer.

Multi-trainer days became easier to hold together because the programme could define energy, transitions, and outputs more consistently, rather than relying on individual trainer instincts to do the glue work.

And because the Transfer Loop pushed attention beyond the room, trainers strengthened the close: one thing participants will do differently, when they will do it, who can help, and a commitment said out loud.

“I’ve run workshops for years, but this gave me a new lens on learning behaviours.”

Jim Sutherland, Studio Sutherland

“The cards are brilliant. Perfect for both training and client workshops.”

Alex Lampe, Wiedemann Lampe

© 2026, BehaviourStudio All rights reserved. Behaviour Thinking is a registered trademark of BehaviourStudio.